I get a lot of questions from you all. Which I completely adore, even if I cannot answer them all. But I’m consistently reminded that everyone’s questions are slightly different, which reminds me of the value of articulating broader approaches.

Approaches to decision making are one part of this, but there’s also the question of how to incorporate new data into your analysis of the overall picture. I realized that when people ask about this, I talk a lot about “context” and “overall context” and “contextualizing” and “reading in the context of what we already know”. In fact, though, what I mean is: “Use Bayes’ Rule”. So I thought a post which really dives into Bayes’ Rule would be…fun? Is fun the word for that? I think so.

Teaching example for Bayes’ Rule

I use this example all the time. I think I’ve discussed it in every class I teach, regardless of the topic. I sometimes incorporate it into book talks. Once, I posed it to a group of university trustees (one person got it right).

Here it is:

Imagine there is a disease which affects 1 in 10,000 people. You’ve got a test which detects 99.9% of cases, with just a 2% false positive rate (that is, 2% of people without the disease will still test positive). Someone comes in and they get a positive test. What is the chance they have the disease?

When I give this question, usually people’s answers are in the range of 98%, 99%. Sometimes 95%. People are drawn to the idea that if this is a good test, which detects nearly all cases, then if you have a positive test result, you’re very likely to have it.

The true answer is that if you have a positive test result, your actual chance of having the disease is about 0.5%, or about 1 in 200. So, half of 1 percent. That is, even with a positive test result, you are not that likely to have the disease. (This is called the “posterior probability”).

Why is this?

The key is the false positive rate here, combined with the very low baseline risk. The easiest way to think about it (for me) is the following. Take a sample of 10,000 people. One of them has the disease (on average) and your test is very good — it will almost surely find that one person. 9,999 of them do not have the disease. The test has a 2% false positive rate, which means 2% of those people without the disease will be detected to have it. That’s about 200 people.

The result is that you have (approximately) 201 people who test positive: the one person who actually has the disease, and 200 people who are really negative but where the test was wrong. Of the people who test positive, only about 0.5% actually have the disease. What seems like (and is!) a small false positive rate gets amplified by the low baseline risk.

To be clear, it isn’t that this test isn’t useful. You’ve learned a huge amount from the positive test, since a risk of 1 in 200 is very different from 1 in 10,000. It’s just not 100%.
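If it helps to see the counting argument as arithmetic, here is a minimal sketch in Python. The variable names are mine; the numbers are the ones from the example above.

```python
# Counting version of the teaching example, per 10,000 people.
population = 10_000
prevalence = 1 / 10_000        # 1 in 10,000 people has the disease
detection_rate = 0.999         # the test detects 99.9% of real cases
false_positive_rate = 0.02     # 2% of people without the disease test positive

sick = population * prevalence                    # about 1 person
healthy = population - sick                       # about 9,999 people
true_positives = sick * detection_rate            # the real case, almost surely found
false_positives = healthy * false_positive_rate   # about 200 people

all_positives = true_positives + false_positives
posterior = true_positives / all_positives

print(f"People testing positive: {all_positives:.0f}")
print(f"Chance a positive test is a real case: {posterior:.2%}")
print(f"Baseline risk before the test: {prevalence:.2%}")
```

The last two lines make the same point as the paragraph above: the test moves you from 1 in 10,000 to roughly 1 in 200, which is a big update, just not to anything near 100%.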

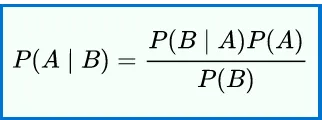

If you want a formal treatment of Bayes’ rule, Wikipedia is helpful. Here’s the quick math:
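Written out, a standard statement of Bayes’ Rule applied to this example looks like the following. The notation is my own shorthand: $D$ means “has the disease”, $\neg D$ means “does not”, and $+$ means “tests positive”.

```latex
P(D \mid +)
  = \frac{P(+ \mid D)\,P(D)}{P(+ \mid D)\,P(D) + P(+ \mid \neg D)\,P(\neg D)}
  = \frac{0.999 \times 0.0001}{0.999 \times 0.0001 + 0.02 \times 0.9999}
  \approx 0.005
```

The numerator is the share of people who are sick and test positive; the denominator is everyone who tests positive, sick or not.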

What does Bayes’ rule do?

Help me understand this better.

This is a fun brain teaser, sure, but it also has implications for how we process new information, for what we learn from it.

Let’s take an example of caffeine in pregnancy. Imagine a new study comes out (an actual new study, with data, not like the one last week) claiming that coffee in pregnancy causes miscarriage. Our hypothetical study finds that caffeine increases the risk of miscarriage, with a result that is “statistically significant”.

How much should we learn from this? It’s going to depend on a few things, all of which go into our calculations above.

- First, the “prior probability”. Before this study was revealed, what did the existing data say about the chance that caffeine causes miscarriage, and how confident were we in it?

- Second, the “false positive” rate. This relates to the significance in the paper. If a result is “significant at the 5% level” (i.e. with a p-value below 0.05), one (slightly loose) way to think of this is that there is a 5% false positive rate.

- Third, the “true positive rate”. That is, if the effect is real, what is the chance this paper picks it up? For simplicity, I’m going to ignore this for now and just assume that if there is a real effect, we’d see it.

Let’s start with the importance of the prior probability. Imagine that coming into this study I was really, really sure — like 99.99% sure — that a small amount of caffeine does not cause miscarriage. And now there is a study with a 5% false positive rate, saying that caffeine does cause miscarriage. I do my fancy Bayes’ Rule calculation on that, and what I come out with is: after seeing these data, I am 99.8% sure that a small amount of caffeine doesn’t cause miscarriage. I’m still really, really confident in my original conclusion.

Why?

Basically, because I was so sure beforehand, the addition of these new data makes me a little bit more worried, but only a little bit.

We can contrast with someone who was unsure before — who maybe thought there was a 50% chance that caffeine is dangerous. For them, after they see these new data, they think the chance is 95%. This information moves their estimate a lot, simply because they knew less to begin with.
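Here is a rough sketch of the calculation behind those two numbers. The function name `posterior` and its arguments are my own labels, and it uses the simplification from above: the true positive rate defaults to 1, meaning a real effect would always be detected.

```python
def posterior(prior, false_positive_rate, true_positive_rate=1.0):
    """Chance the effect is real, given that the study found one (Bayes' Rule)."""
    real_and_found = prior * true_positive_rate
    not_real_but_found = (1 - prior) * false_positive_rate
    return real_and_found / (real_and_found + not_real_but_found)

# "prior" here is the chance that caffeine DOES cause miscarriage.
confident = posterior(prior=0.0001, false_positive_rate=0.05)  # 99.99% sure it is safe
unsure = posterior(prior=0.50, false_positive_rate=0.05)       # 50/50 beforehand

print(f"Confident reader: still {1 - confident:.1%} sure caffeine is safe")
print(f"Unsure reader: now thinks there is a {unsure:.0%} chance it is dangerous")
```

Same study, same 5% false positive rate; the only thing that differs between the two readers is where they started.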

The second thing that matters is the false positive rate. What if the study were really, really big and really, really convincing — a randomized controlled trial with millions of women which concluded coffee was dangerous in pregnancy. Such a large study would probably have a very high level of significance, a very low “false positive” risk.

We can take the person who is 99.99% sure that caffeine is safe. If they encountered a study with a 0.01% false positive rate, their updated probability would be 50%. They’re still not fully convinced — their prior is so strong — but the more precise data, the lower false positive rate, gets much more weight with them. (For the person who started at 50%, after seeing this good study they’d be all the way at 99.99%).

I’ve framed this in terms of how convinced an individual is, but really I think you can read this as how convincing the baseline data is. When we are very, very sure about something, new evidence shouldn’t move us as much. When we are unsure, it moves us a lot. Similarly, the better the new evidence, the more it should move you. Yes, these points may seem obvious. But I’m not sure we always incorporate them into our thinking.
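One way I find helpful to see both points at once is the “odds” version of Bayes’ Rule, where the new evidence acts as a multiplier on what you believed before. This is an equivalent rearrangement rather than a different method, and the function below is only an illustrative sketch (the names are mine, and it again assumes a real effect is always detected unless you pass a lower true positive rate).

```python
def update(prior, false_positive_rate, true_positive_rate=1.0):
    """Bayes' Rule in odds form: posterior odds = prior odds * likelihood ratio."""
    prior_odds = prior / (1 - prior)
    likelihood_ratio = true_positive_rate / false_positive_rate
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1 + posterior_odds)

priors = [0.0001, 0.50]                 # very sure it is safe vs. unsure
false_positive_rates = [0.05, 0.0001]   # ordinary study vs. huge, convincing study

results = {}
for prior in priors:
    for fpr in false_positive_rates:
        results[(prior, fpr)] = update(prior, fpr)
        print(
            f"prior {prior:.2%}, false positive rate {fpr:.2%} "
            f"-> posterior {results[(prior, fpr)]:.2%}"
        )
```

With a 5% false positive rate the study multiplies your odds by 20; with a 0.01% rate it multiplies them by 10,000. A strong prior needs a big multiplier to move, and a weak one does not.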

Where is Bayes’ Rule useful?

I literally use Bayes’ Rule all the time. But in terms of the topics I talk through here, a couple of examples…

New Studies

Much of what we all think about is how to interpret new studies, be they about parenting or pregnancy or, in this moment, COVID. I’ve talked and written a lot about being careful not to over-react to new studies. That’s really a pitch to use Bayes’ rule. When we see a new study, think about (a) what you knew before and (b) how informative this study is. What’s the “false positive” chance you assign to these findings?

When I looked at that new study on caffeine last week, I came into it with a strong prior (based on all of the other information we had) that some caffeine during pregnancy was safe. Combined with the fact that the study was bad (which we could frame as a “high false positive” rate), my updated probability was not very different, or not at all different.

A contrast is something like the ARRIVE trial, on 39 week induction, which I wrote more about here. Coming into reading that study, my sense was the question of whether inductions lead to C-sections was much less settled. The fact that the study was very good, was very convincing, moved my judgment about this link quite a lot.

And, of course, I use this all the time in studies on COVID-19, as I try to take each piece of new data (on kids, on schools, on transmission) and incorporate it in my thinking.

When you read a new study, read it through this lens: what’s your prior, and what’s the false positive rate?

Symptoms and Test Results

The other place this comes up is in how we interpret medical information, including both symptoms and test results.

The basic observation is that if something is unlikely to begin with, symptoms or test results should be interpreted in that light. Headache is a classic example: if someone has a severe headache, you should not automatically jump to the fear that they have a brain tumor (even though severe headaches are common with tumors), since brain tumors are very, very rare and most severe headaches are not tumor-related.

In pregnancy, this arises (for example) in thinking through genetic testing results. Fetal genetic screening has gotten better, but there are still false positives in many of our tests. If you are young, if your baseline risk is low, even a worrisome test result does not necessarily mean the chance of a problem is very high.

Similar things come up with kids. One of my children once failed a hearing test, necessitating a visit for a formal audiological exam. It was hard not to freak out (this is always hard for me) but Jesse pointed out that given everything else we knew (the kid in question talked all the time, had a good vocabulary, her teachers hadn’t complained about it) our prior probability of her having a hearing problem was very low. Given that the tests have high false positive rates, I should calm down. (It was fine).

And I would be remiss not to talk through COVID-19 testing. All of our COVID-19 tests have some false positive rate, including the common PCR nasal swabs. One of the issues which comes up as (for example) universities do massive asymptomatic surveillance testing is that at least some people identified as COVID-19 cases will be false positives. In a population with low prevalence, which we hope will be the case at these universities, a lot of the positive tests may be false positives. Even if you get a positive test result, the actual risk of having COVID-19 may still be low. (Don’t worry if you live around a college: nearly all of them are requiring people to quarantine with a positive test, even if it may be a false one).
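To see how much prevalence matters, here is a small sketch that holds the test fixed and varies how common the condition is. To be loud about the assumption: the test characteristics are borrowed from the teaching example at the top (99.9% detection, 2% false positives) and are not real figures for any COVID-19 test, and the prevalence values are just illustrative.

```python
def chance_positive_is_real(prevalence, detection_rate=0.999, false_positive_rate=0.02):
    """Share of positive tests that are true cases, for a given prevalence.

    Defaults are the teaching example's numbers, NOT real COVID-19 test figures.
    """
    true_pos = prevalence * detection_rate
    false_pos = (1 - prevalence) * false_positive_rate
    return true_pos / (true_pos + false_pos)

# Illustrative prevalence levels, from very rare to fairly common.
prevalences = [0.0001, 0.001, 0.01, 0.10]
shares = [chance_positive_is_real(p) for p in prevalences]

for p, share in zip(prevalences, shares):
    print(f"prevalence {p:.2%}: {share:.1%} of positive tests are real cases")
```

The same test looks very different depending on the population it is used in: when almost nobody has the condition, most positives are false; as prevalence rises, a positive result becomes much more trustworthy.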

Conclusion

Bayes’ Rule is the Best!

(Here is hoping I do not get a lot of emails from statisticians complaining about how I have not explained this in a way they like.)
