Today’s newsletter is a single-paper deep dive. I’m often reluctant to put this kind of focus on a single paper, especially one that isn’t (say) a large randomized trial. Today’s topic is the impact of screen time on IQ, and it’s an example of a problem where it frequently makes sense to look at the entire literature all at once. Focusing on one result can put too much weight on just one set of data.

However: I think this particular paper is a useful one to talk through, since the methods are interesting, it illustrates some of the difficulties of addressing this question, and it starts by arguing the opposite of most of the literature.

The paper is here. The title: “The impact of digital media on children’s intelligence while controlling for genetic differences in cognition and socioeconomic background.” The headline finding: Exposure to video games is associated with faster intelligence growth over two years in late elementary school. Social media is neutral, and watching videos is also associated with faster intelligence growth.

The authors make much of the gaming finding especially, even saying early in the paper, “In specific, we had a strong expectation that time spent playing video games would have a positive effect on intelligence.” They theorize that the fast pace of the games pushes children to learn more quickly, or serves as a kind of “cognitive training.” The paper goes so far as to suggest that some of the overall increase in measured IQ over the past century (called the Flynn effect) is a result of video game and screen exposure.

This whole discussion (the statement of initial expectations, the findings, etc.) is really different from most of what the literature finds or focuses on. This is why it’s an interesting deep dive. The TL;DR of what follows is that I do not think this paper makes a compelling case that video games are good for cognitive development, any more than other papers make the case that they are bad. Beyond that, it’s revealing about the big-picture issues in asking this question.

The paper and its findings

This paper tackles the question of whether exposure to media, in various forms, impacts measured IQ. The researchers approach this problem by using a sample of almost 10,000 children ages 9 and 10 who were studied in 2015 as part of the ABCD Study in the U.S. Children in the study were surveyed and asked about, among other things, their media consumption. Measures of IQ were collected both at baseline and, for about 5,000 of the children, at a two-year follow-up.

The basic approach in this paper is similar to that in much of the literature. The authors look at the relationship between IQ and reported media consumption. They differentiate among gaming, television watching, and social media.

When doing this analysis, the goal is to identify causal relationships in the data. This can be challenging. The first reason this is a hard problem is one I talk about a lot in the newsletter: differentiating correlation and causality. Media consumption habits differ across children for many reasons, and it’s hard to know if any differences in outcomes are due to media or to these other differences. This is true in the data used in this paper, too.

A central element of any paper of this type, at this point, is what approach the researchers will take to deal with these causality concerns. The most basic approach is to add more control variables — to adjust for more differences across family types. The authors here add two elements that they feel improve their inference: “polygenic scores” and exploiting changes over time.

The first innovation: the polygenic scores. Children in the study had genetic sequencing done. The authors use these genetic results to construct a genetic score that is predictive of IQ. They rely on existing published data that has shown some correlation between particular genetic polymorphisms and measured IQ — together, these “genetic scores” explain perhaps 10% of IQ variation. The authors’ argument is that by controlling for these, they are better able to adjust for possible confounding variation across children. Effectively, they see their analysis as calculating the impact of screen exposure on measured IQ, controlling for genetically predisposed IQ.

This is a cool idea, although it’s easy to overstate how valuable it is for identifying causal relationships. Two people with the same genetic score are not “identical,” so this isn’t equivalent to randomization. In my view, this factor improves the quality of their controls but does not fix causality issues.
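To make that point concrete, here is a toy simulation. It is not the paper's data or method. It assumes a hypothetical unobserved background factor that drives both screen time and IQ, a true screen effect of exactly zero, and a genetic score that captures 10% of the variation in that background factor. Every name and number in it is invented for illustration.

```python
import random
import statistics


def ols_slope(x, y):
    """Slope from a simple one-variable least-squares regression of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var = sum((a - mx) ** 2 for a in x)
    return cov / var


def residualize(v, s):
    """Remove the part of v that is linearly predicted by s."""
    b = ols_slope(s, v)
    mv, ms = statistics.fmean(v), statistics.fmean(s)
    return [vi - mv - b * (si - ms) for vi, si in zip(v, s)]


def simulate_partial_control(n=20000, share=0.10, seed=2):
    rng = random.Random(seed)
    screen, iq, score = [], [], []
    for _ in range(n):
        background = rng.gauss(0, 1)  # hypothetical unobserved confounder
        # Assumed: the score explains `share` of the confounder's variance.
        score.append(share ** 0.5 * background
                     + (1 - share) ** 0.5 * rng.gauss(0, 1))
        screen.append(background + rng.gauss(0, 1))
        # Assumed: screen time has NO effect on IQ in this toy world.
        iq.append(2.0 * background + rng.gauss(0, 1))
    naive = ols_slope(screen, iq)
    # Controlling for the score = regressing residuals on residuals.
    adjusted = ols_slope(residualize(screen, score), residualize(iq, score))
    return naive, adjusted


naive, adjusted = simulate_partial_control()
print(f"no controls: {naive:.2f}   controlling for score: {adjusted:.2f}")
```

Under these assumptions the slope with no controls is about 1.0 in expectation, and controlling for the score moves it only to about 0.95, even though the true effect is zero. A control that captures a small share of the confounding variation removes only a small share of the bias.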

The second innovation: changes over time. The primary analysis in the paper asks whether exposure to screens impacts the change in IQ from baseline to two-year follow-up. Their argument is that this effectively holds individual characteristics constant and allows them to isolate the impact of screen time.

I think this approach is confused. First, it is not at all clear why the causality issues that plague the analysis of IQ levels are made better in changes. The amount of screen exposure between the ages of 9 and 11 is also correlated with other aspects of family that probably affect learning over this period.

Second, this ignores any dynamic effects. Imagine (this is hypothetical) that screen time between the ages of 3 and 9 is very damaging but that between the ages of 9 and 11 it’s not as bad. The kids who have a lot of screen time between 9 and 11 very likely also had a lot of screen time between 3 and 9. If the screen time is getting relatively less damaging, you would expect to see them catch up in test scores between 9 and 11. But not because screens are good! Looking at changes in test scores can make our estimates more precise, but it does not fix the causality problem in this case.
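Here is a minimal sketch of that hypothetical in code. It assumes each child has one persistent screen habit, that early screen time (ages 3 to 9) is quite harmful, that later screen time (ages 9 to 11) is mildly harmful, and that half of the early damage fades by age 11. That fade-out is my added assumption to generate the catch-up, and all of the numbers are made up.

```python
import random
import statistics


def ols_slope(x, y):
    """Slope from a simple one-variable least-squares regression of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var = sum((a - mx) ** 2 for a in x)
    return cov / var


def simulate_catch_up(n=20000, early_harm=10.0, late_harm=1.0,
                      fade=0.5, seed=0):
    rng = random.Random(seed)
    screen, change = [], []
    for _ in range(n):
        habit = rng.random()  # same screen habit at ages 3-9 and 9-11
        # Age 9 score: lowered by early screen time.
        iq9 = 100 - early_harm * habit + rng.gauss(0, 5)
        # Age 11 score: a share `fade` of the early damage remains,
        # plus a smaller amount of new damage from ages 9-11.
        iq11 = (100 - early_harm * fade * habit
                - late_harm * habit + rng.gauss(0, 5))
        screen.append(habit)
        change.append(iq11 - iq9)
    return ols_slope(screen, change)


slope = simulate_catch_up()
print(f"slope of IQ change on screen time: {slope:.2f}")
```

In this toy world screens lower scores at every age, yet the regression of the change in IQ on screen time comes out positive (about early_harm * (1 - fade) - late_harm = 4 in expectation). The heavy users are recovering from a deeper hole, not benefiting from screens.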

Digging into this paper, the most compelling result for me is the finding — toward the end — where the authors adjust for sibling fixed effects. Here, they are looking within families at differences in exposure and test scores. This goes much further toward controlling for family background than the polygenic scores. In that analysis, they do not observe any effect, positive or negative.
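A sketch of why the within-family comparison is more convincing, again as a toy simulation and not the authors' actual specification. It assumes two siblings per family, a hypothetical family background factor that raises both screen time and test-score growth, and a true screen effect of zero. Subtracting each family's average from its own children is the simplest way to mimic sibling fixed effects.

```python
import random
import statistics


def ols_slope(x, y):
    """Slope from a simple one-variable least-squares regression of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var = sum((a - mx) ** 2 for a in x)
    return cov / var


def simulate_siblings(n_families=10000, true_effect=0.0, seed=1):
    rng = random.Random(seed)
    x_raw, y_raw, x_within, y_within = [], [], [], []
    for _ in range(n_families):
        family = rng.gauss(0, 1)  # hypothetical shared family background
        screens = [family + rng.gauss(0, 1) for _ in range(2)]
        growth = [true_effect * s + 2.0 * family + rng.gauss(0, 1)
                  for s in screens]
        s_bar, g_bar = statistics.fmean(screens), statistics.fmean(growth)
        for s, g in zip(screens, growth):
            x_raw.append(s)
            y_raw.append(g)
            # Deviation from the family mean removes anything siblings share.
            x_within.append(s - s_bar)
            y_within.append(g - g_bar)
    return ols_slope(x_raw, y_raw), ols_slope(x_within, y_within)


across_families, within_family = simulate_siblings()
print(f"across families: {across_families:.2f}   "
      f"within families: {within_family:.2f}")
```

Comparing across families, the slope is about 1.0 in expectation under these assumptions, which is pure confounding. Comparing siblings to each other, it is close to zero, which is the true effect here. The caveat is that this only removes what siblings share. Anything that differs between siblings and also affects screen time is still in there.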

For me, that’s the takeaway from the data. I am not persuaded by the arguments that gaming increases IQ. I find the sibling analysis, which shows no impact, to be the most convincing. I will say, apropos of where I started this post, that some of that feeling is informed by what I know from other papers. Generally, the effects of screen time on kids seem to be largely neutral.

Conceptual issues writ large

The above analysis is standard debunking. This is correlation and not causality, sibling fixed effects do it better, and so on. All perhaps useful if your instinct was to go out and buy a video game console to help your child achieve better in school.

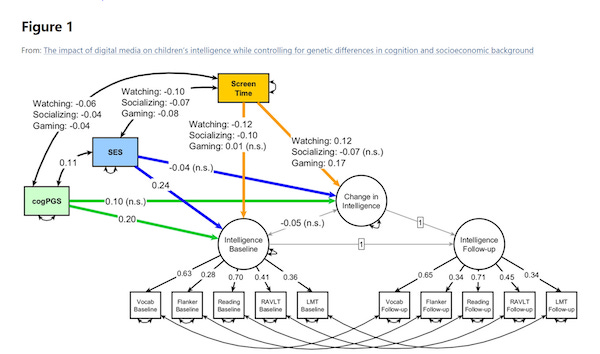

But what I found more instructive about this paper is how it made me think about the difficulty of making any progress at all on these issues. One reason is that it highlights the tremendous interconnectedness here. The authors include a helpful (?) diagram illustrating how they see the mechanisms, which I’ve included above. Their interest in the paper is in identifying the impacts of screen time on the change in intelligence (their yellow lines). But simultaneously there are a million other things going on.

Baseline intelligence is impacting the change. Screen time is impacting the baseline. Socioeconomic status is impacting screen time and being impacted by screen time (that last is a bit unclear). Screen time is impacting itself (I am not precisely clear on that one either). It seems likely that these pathways all intersect. Not even considered here are the issues associated with selection in studies like this: the populations being studied may be different than the average. The bottom line is that identifying any one of these pathways, and separating it from the others, is just hugely difficult to think about.

The second conceptual issue is about alternative uses of time. I find it impossible to think about the impacts of gaming or TV watching or social media without thinking about what they crowd out. In the paper, the authors talk about the crowding out between these items. As in: maybe gaming looks good because it crowds out social media. But if we step back, we realize that any screen time here must crowd out something.

I’ve said this many times before, but it bears repeating that probably the biggest consideration with screen time is simply what else a person could be doing (more here). “Is video game playing good or bad?” is ill-defined without saying clearly what else someone would be doing with that hour or two.

In the end, this last point makes me pessimistic that research will ever answer the screen-time questions parents have. Even a randomized trial of particular types of screen time, or access to screen time, is going to be fraught if we do not understand well what the time is substituting for. Many of these choices are going to need to be made by relying on what we think works well for our family and, honestly, relying on our gut. Without much help from the data. Sometimes that’s just the reality.
